Above: Scene from the movie ‘Chappie’ (2015), directed by Neill Blomkamp

One of the most famous artificial intelligence (AI) entities in modern popular culture is arguably the HAL 9000 computer in the modern classic ‘2001: A Space Odyssey’; the insider joke being that when we shift each letter one place forward in the alphabet, ‘HAL’ reads ‘IBM’. While HAL was creepy and evil, viciously attempting to kill the spaceship’s crew, we have in the meantime happily accepted the first wave of AI without much suspicion. Apple’s SIRI, Microsoft’s CORTANA and Facebook’s ‘M’ (the latter is still in development, but watch out for it) represent the latest generation of commercialized AI in the form of friendly personal assistants. Who wouldn’t like to have a digital servant at their disposal?
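For the curious, the HAL-to-IBM letter shift can be checked in a few lines of Python. This is only a minimal sketch; the function name `shift_letters` and its `offset` parameter are made up for this illustration.

```python
def shift_letters(text: str, offset: int = 1) -> str:
    """Shift each ASCII letter by `offset` places, wrapping around from Z to A."""
    shifted = []
    for ch in text:
        if ch.isascii() and ch.isalpha():
            # Pick the start of the alphabet that matches the letter's case.
            base = ord("A") if ch.isupper() else ord("a")
            shifted.append(chr((ord(ch) - base + offset) % 26 + base))
        else:
            # Leave digits, spaces and punctuation untouched.
            shifted.append(ch)
    return "".join(shifted)


print(shift_letters("HAL"))  # prints IBM
```

Shifting by `-1` reverses the operation, so `shift_letters("IBM", -1)` gives back `HAL`.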

CORTANA, for example, is courteous and friendly and diligently sends complex user profiling data back to her master, in this case Microsoft. The other models mentioned are no different in their information-delivering loyalty. AI obviously comes with the programmed, built-in agenda to make a profit for its owners. The only convincing way to create a truly private assistant would be the development of local AI. Speech recognition and machine learning have made tremendous leaps in usability over the past decade. But why is the humble PC sitting on my desk still as uninspiring as a rock? Why don’t I believe anything that SIRI says? My personal and disappointing experience with AI came in the form of a car navigation system which sent me in continuous loops around the city, with the effect that I missed my flight. Then again, how do we define the ambiguous term ‘intelligence’?

A well-known procedure to test ‘machine intelligence’ is the Turing Test, which has inspired generations of science fiction writers. To dispel a common myth: the Turing Test was not designed to prove whether computers can or cannot think. It was designed to have a computer lie (we may also say ‘fake’ or ‘make-believe’) in such a manner that a human dialogue partner cannot tell whether the conversation partner is human or machine. The Turing Test is a test of performance, not a test of whether or how machines are capable of mental states.

The claim that in the very near future computers will be capable of consciousness is one of the most fascinating public debates. When will we become obsolete? When will the Terminator knock at our door? Looking at my home computer, probably not anytime soon. Followers of ‘Transhumanism‘ (‘h+’ for short) and advocates of strong AI (the label for the idea of emerging self-conscious machines), such as one of their most prominent speakers, Ray Kurzweil, cite two key arguments for why the end of humanity as we know it is inescapable and nigh. Stephen Hawking believes in the inevitable advent of strong AI as well.

Pro Singularity: The Complexity-Threshold Argument and the Reverse-Engineering Argument

Firstly, it is argued that the performance of massively parallel computing increases exponentially. This is why, at some stage, consciousness may spring into existence once a certain threshold of complexity is reached. A single neuron cannot create consciousness, but billions of neurons can, which is the analogy being drawn. Secondly, by reverse-engineering the human brain, software can simulate precisely the same functions as neuronal networks. It is therefore anticipated to be only a matter of time before the ‘singularity’, the advent of machine consciousness, arrives. If it does, so transhumanists conclude, biological intelligence becomes obsolete and we will eventually be replaced by the ‘next big thing’ of evolution, the ‘h+’. So much for cheerful prospects.

The Simulation-Reality Argument

One of the most ardent critics of this claim is Yale computer scientist David Gelernter. For Gelernter, to start with, simulations are not realities. We may, e.g., simulate the process of photosynthesis in a software program while de facto no real photosynthesis has taken place. According to Gelernter, computers are simply made out of the wrong stuff. No matter how sophisticated or complex the software program that simulates a process, it cannot transform actual carbon dioxide into sugar and oxygen. We can simulate the weather, but nobody gets wet. We can simulate the brain, but no mind emerges. The underlying argument states that digital, quantum and biological modes of computation involve fundamentally different types of causation and therefore cannot be substituted for one another. Consciousness, Gelernter concludes, is an emergent biological property of the brain.

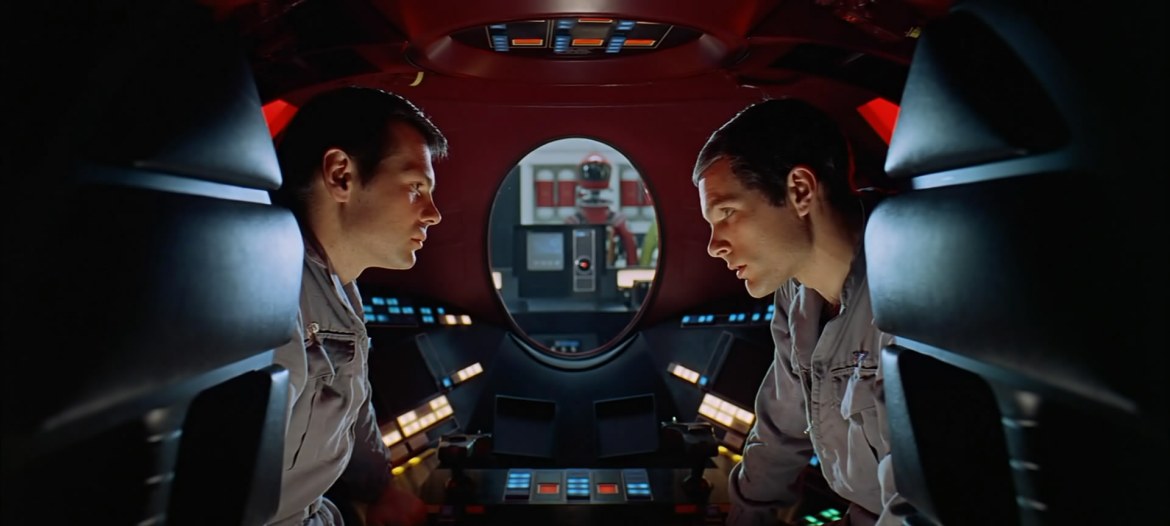

Above: Big Brother is watching you. In Stanley Kubrick’s ‘2001 – A Space Odyssey’ (1968) this was the legendary HAL 9000.

The Mind-Brain Unity Argument

Brains develop organically over an entire lifetime. Our minds, as emergent properties of the brain, are intrinsically linked to the unique structure of neural pathways. The brain is not simply ‘hardware’; it is the physical embodiment of life-long learning processes. This is why we cannot ‘upload’ a mind into a computer: we cannot separate the mind from its brain. For the same reason we cannot run several minds on the same brain the way we run several programs on a single computer. There is only one mind per brain and it is not portable.

The Psychological Goal-Setting Argument (Ajzen-Vygotsky Hypothesis)

Besides the obvious physical differences between brains and computers, the cognitive differences could not be greater. AI developer Stephen Wolfram argues that the ability to set goals is an intrinsic human ability. Software can only execute those objectives that it was programmed and designed to execute. An AI cannot meaningfully set goals for itself or others. The reason for this, according to Wolfram, is that goals are defined by our particulars: our particular biology, our particular psychology and our particular cultural history. These are domains that machines have no access to or understanding of. One could also argue in reverse: because human life develops and grows within social scaffolding (a concept developed by psychologist Lev Vygotsky, the founder of a theory of human cultural and bio-social development), deeply embedded in semantics, it is experienced as meaningful, which is a necessary prior condition for defining goals and purpose. This would be the psychological extension of Wolfram’s argument.

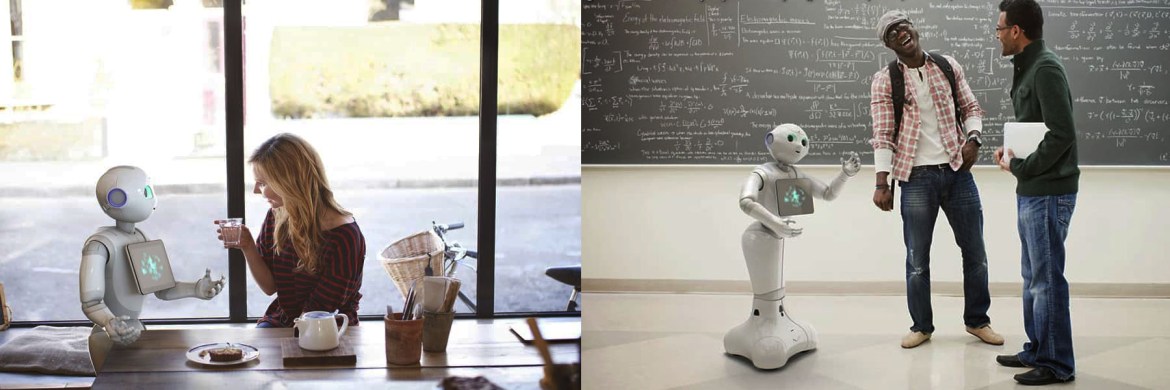

Above: The lovable Japanese service-robot Pepper recognizes a person’s emotional states and is programmed to be kind, to dance and entertain. Is the idea of AI-driven robots as sweet, helpful assistants necessarily bad? It is easy to see that the idea could be reversed (imagine military robots), giving weight to Isaac Asimov’s ‘Three Laws of Robotics’: (1) A robot may not injure a human being or, through inaction, allow a human being to come to harm. (2) A robot must obey orders given it by human beings except where such orders would conflict with the First Law. (3) A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

Setting goals also depends on a person’s attitude and underlying subjective norms in order to form intentions. If we expected machines to set goals, they would not only be required to understand socially-embedded semantics, but also to develop attitudes and a subjective model of desirable outcomes. This requirement has been extensively researched in the Theory of Planned Behavior (TPB) by Icek Ajzen. We may call the hypothesized inability of an AI to set meaningful goals the ‘Ajzen-Vygotsky hypothesis‘. The bar is set even higher when we consider not only individual planning, which could be arbitrary, but also the ability to set goals consensually and cooperatively.

Arguing for Weak AI instead

What machines unequivocally do get better at is pattern recognition, such as analyzing our habits (e.g., which types of products or restaurants we prefer), recognizing speech or reading emotional states, for example by webcam facial analysis or by measuring the heartbeat via fitness wristbands. AI is getting better at assembling and updating profiles of us and at responding to profile changes accordingly, which is a novel, interactive quality of modern IT.

In our role as eager social network users, we continuously feed AI the required raw material, which is precious user data. Higher-level interaction based on refined profiles can be very useful. AI can, e.g., assist us via a single voice command, rendering the use of multiple applications obsolete. AI can manage applications for us in the background while we focus on the task at hand. On the darker side, AI may compare our profile and actions to those of others, without our knowledge and consent, for strategic purposes, which represents a more dystopian possibility (or already-established NSA practice).

Machine Learning is not an Easy Task when there is Little Data Available and Environments are Complex – Another Argument for Weak AI

Above: Many car manufacturers are currently working on self-driving cars. Here Mercedes’ concept study, the F 015, ‘Luxury in Motion’. Non-car companies such as Google and Apple have joined the race.

If it looks like a dog, barks like a dog and behaves like a dog, the probability is high that we are indeed dealing with a dog and not a hyena or a goat. Several independent cues are needed, since a single low-pixel camera input may deceive the AI.
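To make the dog example a little more tangible, here is a minimal sketch of how several weak cues could be combined with Bayes' rule. All numbers in `PRIOR` and `LIKELIHOOD` are hypothetical values chosen purely for illustration, not measurements, and the cues are assumed to be conditionally independent, which real sensor data rarely is.

```python
from math import prod

# Hypothetical prior: all three animals are assumed equally likely beforehand.
PRIOR = {"dog": 1 / 3, "hyena": 1 / 3, "goat": 1 / 3}

# Hypothetical likelihoods: the chance of observing each cue, given the animal.
LIKELIHOOD = {
    "looks_like_dog": {"dog": 0.8, "hyena": 0.5, "goat": 0.2},
    "barks_like_dog": {"dog": 0.9, "hyena": 0.2, "goat": 0.05},
    "behaves_like_dog": {"dog": 0.8, "hyena": 0.3, "goat": 0.2},
}


def posterior(cues):
    """Combine cues with Bayes' rule, assuming they are conditionally independent."""
    unnormalized = {
        animal: PRIOR[animal] * prod(LIKELIHOOD[cue][animal] for cue in cues)
        for animal in PRIOR
    }
    total = sum(unnormalized.values())
    return {animal: value / total for animal, value in unnormalized.items()}


one_cue = posterior(["looks_like_dog"])   # camera input only
all_cues = posterior(list(LIKELIHOOD))    # look, bark and behavior together
print(f"camera only:    P(dog) = {one_cue['dog']:.2f}")
print(f"all three cues: P(dog) = {all_cues['dog']:.2f}")
```

With these made-up numbers, the camera alone leaves considerable doubt between dog and hyena, while the three cues together point clearly to a dog.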

Above: Systems can be deterministic, but non-computable

The Non-Computational Pattern Argument

An intriguing argument against strong AI was formulated by Sir Roger Penrose, which can be reformulated in the context of mind-environment interaction. Penrose demonstrates in his lecture “Consciousness and the foundations of physics” how a system could be fully deterministic, ruled by the logic of cause and effect, and still be non-computational. It is possible to define a set of simple mathematical rules for the creation of intersecting polyominoes whose sequence is output as a unique, non-repeating and unpredictable pattern. According to Penrose’s argument, there is no algorithm that can describe the evolving pattern.

My immediate question was how this thought-experiment is any different from how we learn in the real world. Each new situation creates unique neuronal pathways in our brain. Since we assume, in addition, upward as well as downward causation between brain (as the biological organ) and mind (the action executed by the organ), cognitive structures evolve (a) non-repetitively and (b) in a self-restructuring manner.

Memories form by weaving subjective and objective information into the fabric of an autobiographical narrative. To claim, counter-factually, that narratives are still somehow ‘computed’ by an infinite number of interconnected internal and external processes misses the point that there is no single algorithm, or program, that can account for a genesis of mind. Such a claim can be dismissed because it leads to an infinite regress.

The ‘Emotional Intelligence’ and Body Argument

Ray Kurzweil is well aware that ‘intelligence’ cannot evolve in abstraction. This is why he emphasizes the importance of ‘emotional intelligence’ for strong AI, to which there are at least two objections. The first objection is that there cannot be emotions when there is no physical body to evoke them from, only software. Computational cognition lacks semantics without the information provided by an embedded, existential ontology, which implies existential vulnerability. The second objection is that the concept of emotional intelligence itself is a good example of deeply flawed pop-psychology. There is no compelling evidence in the field of psychology that emotional intelligence exists and could be validated as a scientific concept.

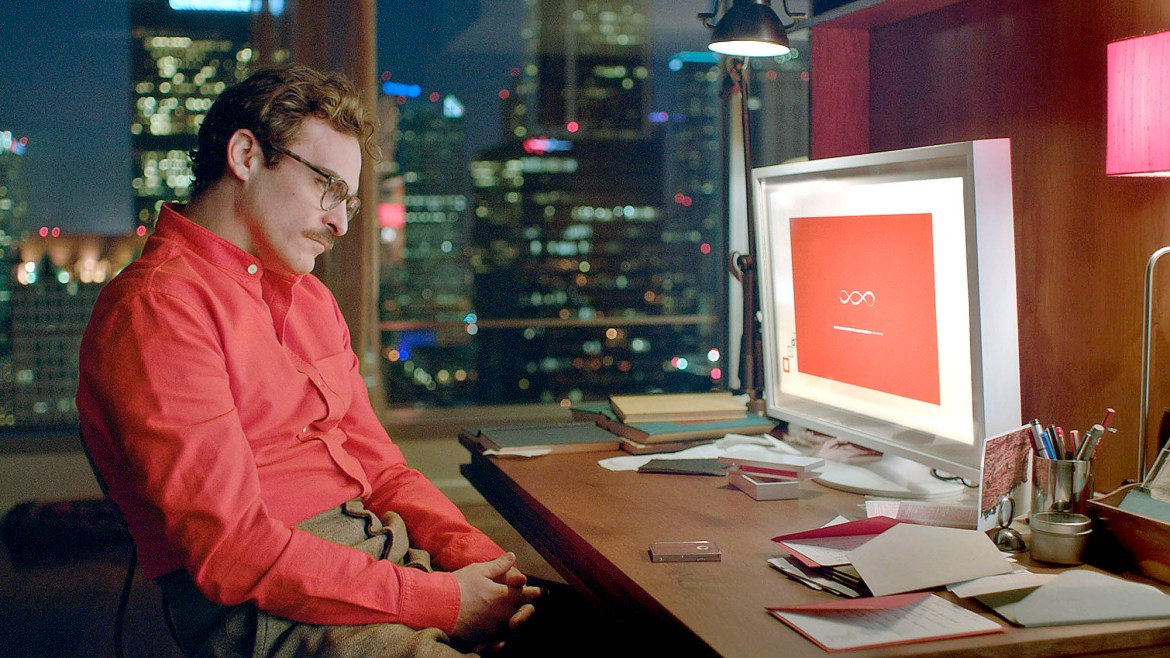

Above: The movie HER (2013), directed by Spike Jonze, explores the human need for companionship. The main protagonist, Theodore (Joaquin Phoenix), falls in love with an AI, Samantha, who eventually outgrows the relationship with her human partner. As a body-less entity, she develops the ability to establish loving relationships with hundreds of users simultaneously and, after an upgrade, a liking for other operating systems which are more similar to herself.

The Multimodal Argument – The Flexibility of Mind

What scientists seem to ignore in the debate about AI is that the human mind can switch between entirely different mental modes, some of which are likely to be more computational (like calculating costs and benefits) and some of which appear to be less computational, or not computational at all (such as reflecting on the meaning and quality of experiences and the value of specific goals). The human mind can effortlessly switch between subjective, objective and inter-subjective modes of operation and perspectives. We can see things from the inside out or from the outside in. In mental simulation, we can reverse assumptions of causation, which is our reality check. As a result of this flexibility, we have developed a plethora of mind-states involving imagination, heuristics, the ability to hold and detect false beliefs or to distinguish between illusion and true states. It is because we make mistakes, and because we experience how painful these mistakes can be, that mental self-monitoring and forethought derive meaning. The multimodal argument rests on the assumption that an entity is capable of conscious experience, bringing us to the qualia argument.

The Qualia Argument

In the Philosophy of Mind, qualia are conceptualized as the subjective qualities of conscious experience. We could argue with Daniel Kahneman that this includes the memories formed from those experiences (the experiencing versus the remembering Self). In the Mary’s Room thought-experiment, philosopher Frank Jackson argues for the non-physical properties of mental states, which relates to what philosopher David Chalmers calls the ‘hard problem of consciousness‘, our inability to explain how and why we have qualia.

The thought experiment is as follows: Mary lives her entire life in a room devoid of color—she has never directly experienced color in her entire life, though she is capable of it. Through black-and-white books and other media, she is educated on neuroscience to the point where she becomes an expert on the subject. Mary learns everything there is to know about the perception of color in the brain, as well as the physical facts about how light works in order to create the different color wavelengths. It can be said that Mary is aware of all physical facts about color and color perception.

After Mary’s studies on color perception in the brain are complete, she exits the room and experiences, for the very first time, direct color perception. She sees the color red for the very first time, and learns something new about it, namely what red looks like.

Jackson concluded that if physicalism is true, Mary ought to have gained total knowledge about color perception by examining the physical world. But since there is something she learns when she leaves the room, then physicalism must be false.

An AI may, in the same manner as Mary, collect information about human interaction and emotions by learning how to read patterns based on programmed algorithms, but it will never be able to experience them. This could be considered a philosophical argument against strong AI (or in support of weak AI that assists us by synthesizing and applying useful information). Linking the multimodal argument to the qualia argument: if the realization of qualia, as a prerequisite, cannot be achieved by machine learning, then the subsequent multimodal mental operations cannot be performed by AI either.

Anthropomorphized Technology: AI, Gender and Social Attitudes

The question posed in a title by science fiction author Philip K. Dick ‘Do Androids Dream of Electric Sheep?’ could be answered, from what has been elaborated, in many ways: (a) Yes, if androids have been programmed to do so (b) Not really, but Turing-wise their dreams seem convincingly real or (c) No, because machines are fundamentally incapable of sentience and self-cognition.

As a big fan of thought-experiments, I enjoyed movies such as ‘Chappie‘, ‘HER‘ or ‘Ex Machina’ thoroughly. A common theme running through all of the stories is the inability of an AI to truly connect to a human understanding of life. Another dominant theme, rather sadly, is the sexual and erotic exploitation of AI by men for the fulfillment of their fantasies (not elaborating on Japanese robot girls here, which is a cultural chapter by itself). It is unlikely that intelligent AI appears anytime soon when all that people can think of is satisfying their most primal urges by creating digital sex slaves, or creating collaborating criminals as elaborated in the movie ‘Chappie’ (2015).

Above: The sexualization of AI to pass the Turing test is a theme in the movie ‘Ex Machina’ (2015) by Alex Garland. Another, more humorous example would be the figure of Gigolo Joe, played by Jude Law, a male prostitute ‘Mecha’ (robot) programmed with the ability to mimic love in Spielberg’s ‘AI’ (2001).

The two most commonly quoted arguments for why most AI are formatted female are that (a) lone male programmers who work on AI de facto create their virtual girlfriends as a compensatory reaction to their social deprivation and (b) men and women alike find a female AI less intimidating and more pleasant to interact with than a male AI. It is revealing how we anthropomorphize technology (as we have, e.g., anthropomorphized Gods), which is worthy of a separate inquiry.

Beyond the obsession with creating artificial intelligence, how about creating artificial kindness, artificial respect, artificial understanding or artificial empathy? We could distribute these qualities among those humans who dearly lack them.

Summary

As weak AI continues to develop, prospects for the advent of strong AI remain in the realm of science fiction. There are compelling arguments that the singularity will not emerge anytime soon and may, in fact, never be realized. One of the key arguments is that biological, digital and quantum systems are based on fundamentally different types of causation. They are not identical and require technological translation. Weak AI can be understood, in this light, as the mediation between human consciousness and information processing in order to serve human needs and goals. The verdict on whether strong AI is possible is still out. We may hypothesize that as synthetic biology advances, AI could one day be given the gift of embodiment, vulnerability and replication. We are not at the end of sci-fi yet and we cannot fully predict the interconnected effects of new digital, quantum-based and biological breakthroughs.

Digital assistants and service robots have already become useful and self-optimizing extensions of our social life. Like all technology, AI is subject to potential abuse, since the ethics of goal-setting, for better or worse, still remain the responsibility of fallible programmers within the open domain of human imagination.

An interesting blog, however I am a little surprised that you didn’t include the ‘non-scientific’ perspective that I know you are quite familiar with. For example, you said, “Our minds, as emergent properties of the brain…” But from the ‘spiritual perspective’ the brain is the embodiment of the emergent properties of the mind. I know this might seem like a small detail, but if it is true that the entire physical universe is really the outcome of mind working through the subtle sphere of energy, then does this not seem to shine an entirely different light on your argument?

Hello Michael, thank you for your comment. You are correct that the blog entry takes the scientific perspective. My ‘spiritual’ take would be closer to Spinoza, who claimed that the universe can explain itself out of itself. I believe that this is what the mind actually does: explaining the universe and, by doing so, explaining itself. The second ‘spiritual’ fact, if we could call it this, is the downward causation of mind to brain (e.g., by meditation or in fact any intentional act); that is, the mind is not just a function of the brain, but both work in interaction. As a scientist, my take on spiritual interpretations is admittedly limited, since it is easy to drift into speculation. But from a monistic and more philosophical view I agree with Spinoza: understanding by mind (subjectively) and understanding by matter (objectively) are simply different ways of looking at the same reality. I hope this interpretation clarifies how I would define the spiritual side of mind-body monism. Kindest regards!